The 150-Pound Computer

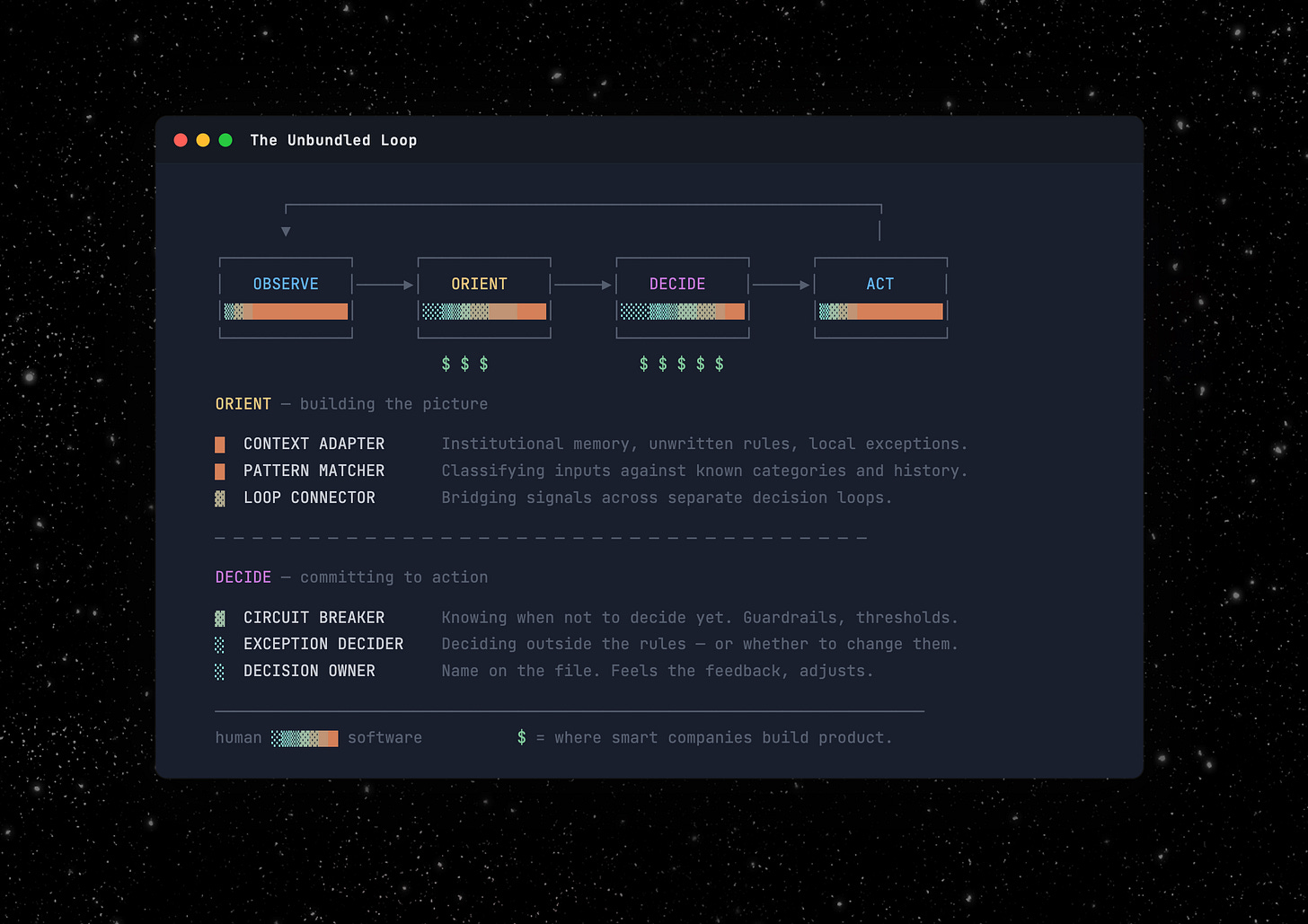

Every job is an OODA loop. AI doesn't replace the loop, but unbundles it into sub-functions. The most valuable ones are irreducibly human, and the smart companies are building for that.

For decades, the operating assumption was simple. Humans were the cheapest general-purpose computers available. Hire them, give them tools, let them process information and make decisions.

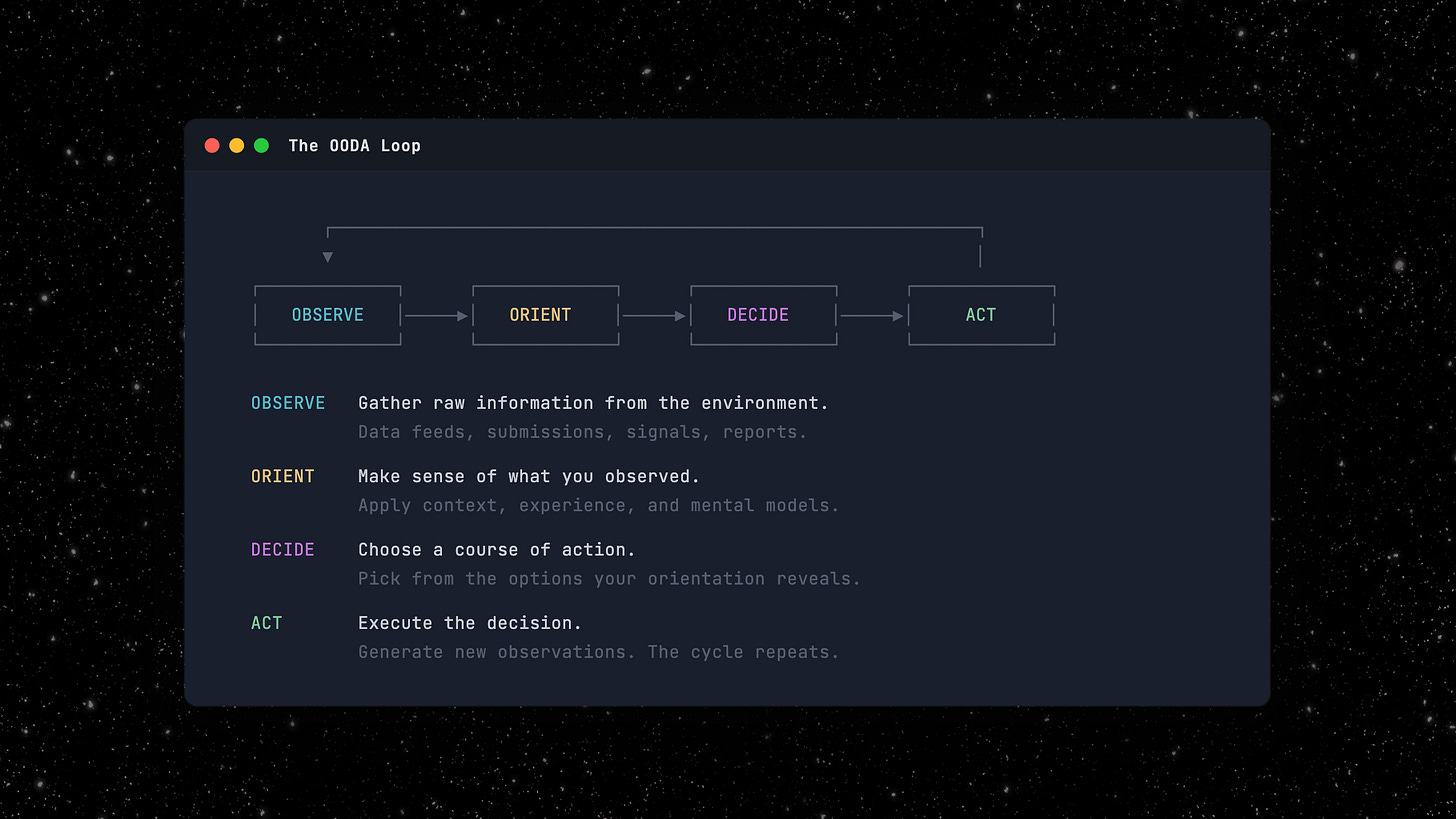

In the 1970s, an Air Force colonel named John Boyd formalized what that 150-pound computer does. He called it the OODA loop: Observe, Orient, Decide, Act. A decision cycle built for fighter pilots. Cycle faster than your opponent and you win. It proved far more universal than Boyd intended.[1]

Every time you hire someone and hand them a set of tools and a set of problems, you’re paying a 150-pound computer to run an OODA loop.

The OODA loop is everywhere. Take insurance underwriting. Submissions land on an underwriter’s desk. She reads the submission, matches it against her carrier’s appetite, decides whether to bind or decline, and issues the quote. Observe, Orient, Decide, Act. Dozens of times a week.

Software ate the edges. AI enters the middle.

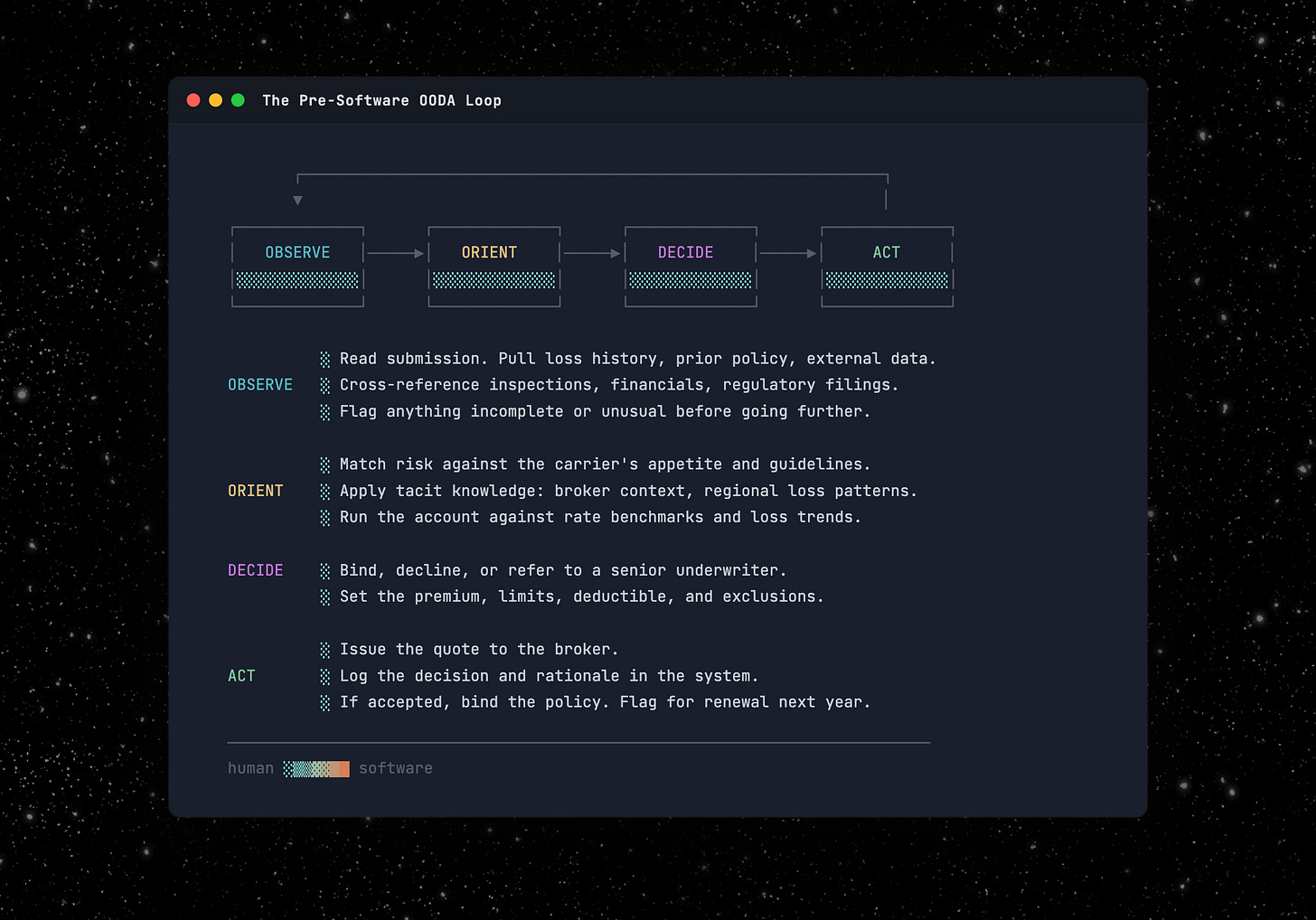

Software made parts of that loop faster, particularly Observe and Act. Data warehouses and submission intake tools on the Observe side. Policy admin systems and workflow automation on the Act side. Rating engines and risk scores nibbled at Orient and Decide, but the core of both (judgment, context, the actual call) stayed human. You can only cycle as fast as the slowest step allows.

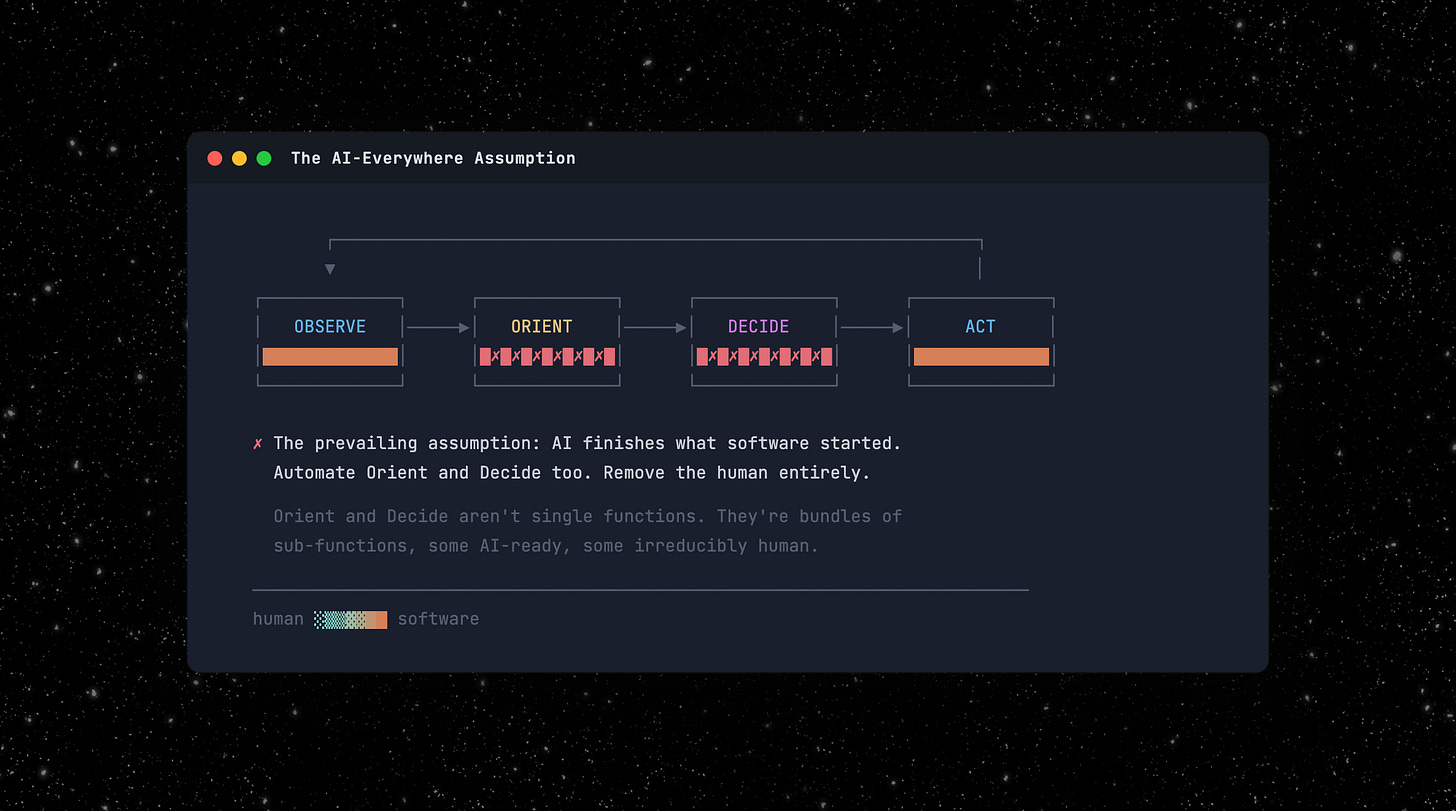

Now AI enters the middle of the loop. The prevailing assumption is that it completes the job software started: automate the whole cycle, remove the human entirely.

For some roles, that’s right. But for most of the interesting ones, it’s a fundamental misunderstanding of what the human was actually doing in Orient and Decide.

Orient and Decide are bundles, not functions

The underwriter wasn’t performing one function. Orient and Decide are each bundles of distinct sub-functions, packaged into a single role because they came free with every hire. We never had to decompose the bundle because there was no reason to. Now there is.

AI absorbs some of these functions today, will absorb others as it matures, and will probably never absorb the rest. Automate the wrong ones and you’ve built a system nobody trusts. Leave the wrong ones to humans and you’re paying for work a model should handle. Here are some of the critical parts of those bundles:

Orient: building the picture

The pattern matcher. Recognizing what kind of thing you’re looking at by matching against known categories. Enough examples exist that AI classifies faster and more consistently than any human. In underwriting, this can mean reading a submission and recognizing it as a standard mid-market GL risk the carrier has seen ten thousand times. Once matched, guidelines prescribe the response. This is the first function AI absorbs end to end.

The context adapter. Every organization accumulates unwritten rules and institutional knowledge that never make it into any system. This function translates that tacit knowledge into judgment on a specific case. In underwriting: knowing that a contractor class is fine in Texas but toxic in Florida, or that this agency’s “preferred” submissions mean the agent’s brother-in-law. LLMs with access to historical data already do this better than any individual. They don’t forget, and they don’t walk out the door with ten years of institutional knowledge.

The loop connector. Bridging decision loops the org chart never connected: someone in one process notices a signal that matters to a different process, like the underwriter who flags a claims trend to the actuarial team. The valuable part isn’t the communication. It’s noticing that the signal was relevant to someone else’s problem. AI today runs one loop well. Connecting loops means recognizing what matters outside your own process. This is still largely unsolved and enormously valuable.

Decide: committing to action

The circuit breaker. Human slowness was a feature. The underwriter who sleeps on a borderline case isn’t necessarily being slow. She is running a second, slower loop. AI doesn’t have that instinct. The hard problem isn’t implementing confidence thresholds. It’s knowing which decisions need the overnight hold and which don’t. Fraud detection should run at machine speed, while underwriting authority on a novel risk class probably shouldn’t. Choosing the right clock speed is itself a judgment call about the nature of the decision.

The exception decider. The case that doesn’t fit the guidelines, where the question is not how to decide within the rules but whether to change them. In underwriting: two carriers with identical models and data diverge entirely based on how they handle exceptions, because that’s where underwriting philosophy lives. AI optimizes for the objective you give it. It doesn’t question the objective.

The decision owner. Someone’s name is on the file, not just for blame, but for iteration: the person who feels feedback from outcomes and adjusts. Regulators require a human in the chain, and reinsurers demand one. This isn’t a cognitive limitation. It’s a structural requirement that changes at the speed of law, not tech.

These aren’t the only functions in the bundle, but they illustrate the gradient. AI is already better at the first two. It will likely absorb the third and fourth as agentic systems mature. The last two are probably irreducible. Not because AI can’t get smart enough, but because exception-deciding is an act of authority, and decision-ownership is a social and legal prerequisite for the transaction to exist.

The same decomposition applies wherever humans orient and decide at scale. In contract law, AI agents already draft standard redlines and learn each client’s preferences, but “commercially reasonable” and “reasonable” look identical to a model and mean entirely different things in court. The lawyer decides whether to push back, and the lawyer’s name is on the opinion.

In procurement, an autopilot benchmarks supplier pricing and handles standard reorders. But staying with a vendor at 20% above market because they alone can deliver by your launch date? That’s exception-deciding. And when that vendor misses a delivery, the relationship that resolves it is human. The labels change but the decomposition doesn’t.

Where value accrues

If your product’s primary value is executing routine decisions, you’re competing with every AI wrapper that can ingest inputs and produce outputs. The moat moves in two directions.

Down to the data layer: the system that has seen ten years of submissions, outcomes, and exception decisions has a better-oriented model than any new entrant. That advantage compounds with every cycle.

Up to the exception-handling layer: the product that makes the exception decider and the decision owner more effective (better context, tighter feedback loops, smarter routing) owns the part of the workflow that can’t be commoditized.

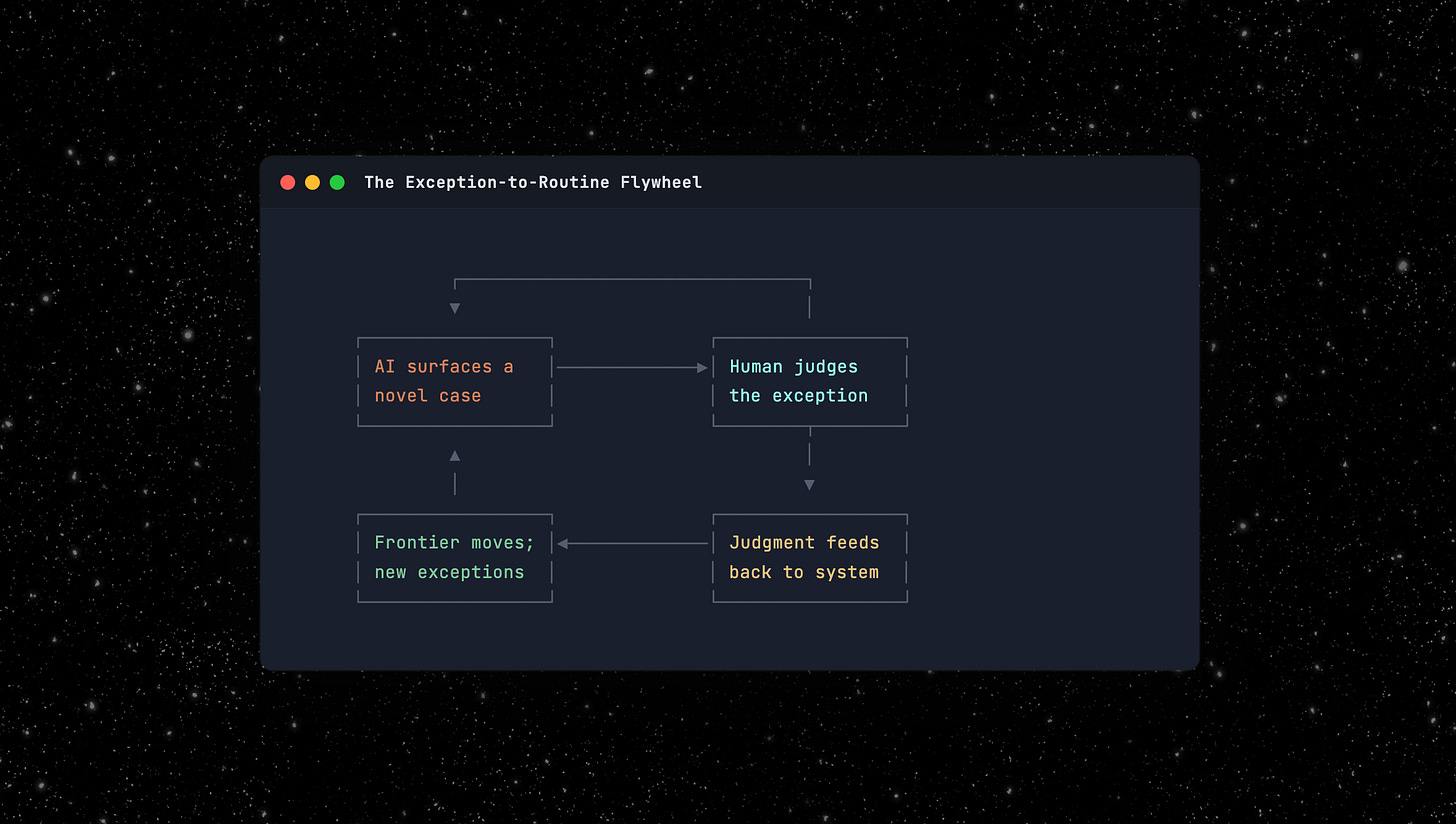

The exception-to-routine flywheel

AI surfaces a genuinely novel case. A human makes a judgment call. That judgment gets fed back into the system so next time it’s routine, not exceptional. The exception pool shrinks, the system compounds, and a competitor starting fresh has to build that history one judgment call at a time.

But the exception pool doesn’t shrink to zero. The frontier moves. Automate today’s exceptions and you can take on risk classes you couldn’t touch before. That generates new exceptions at a higher level of complexity. The human doesn’t become unnecessary. The human operates on increasingly valuable problems.
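The flywheel can be sketched as a toy loop. This is only an illustration under loud assumptions: the `ExceptionFlywheel` class, the string "signature" for a case, and the stand-in `underwriter` function are all hypothetical, and a real system would match on similarity rather than exact signatures. It shows the one mechanic that matters: a human ruling on a novel case becomes a precedent, so the same case is routine the next time.

```python
from dataclasses import dataclass, field


@dataclass
class ExceptionFlywheel:
    """Toy model: human rulings on novel cases become routine precedents."""

    # Hypothetical store mapping a case signature to the human's decision.
    precedents: dict = field(default_factory=dict)
    human_calls: int = 0

    def decide(self, signature, ask_human):
        """Return (decision, source), where source is 'routine' or 'human'."""
        if signature in self.precedents:
            return self.precedents[signature], "routine"
        decision = ask_human(signature)          # genuinely novel: escalate
        self.human_calls += 1
        self.precedents[signature] = decision    # feed the judgment back in
        return decision, "human"


def underwriter(signature):
    # Stand-in for a real person's judgment call (assumed rule, for demo only).
    return "decline" if "florida" in signature else "bind"


flywheel = ExceptionFlywheel()
first = flywheel.decide("roofing-contractor/florida", underwriter)
second = flywheel.decide("roofing-contractor/florida", underwriter)
third = flywheel.decide("drone-delivery/texas", underwriter)

print(first, second, third, flywheel.human_calls)
```

The second call never reaches the human, while the third, a new risk class, does. That is the frontier moving: the pool of exceptions is refilled by the new business the precedents make possible.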

Building for the human functions

In practice this means surfacing exceptions with full context, not just “this case is unusual” but why it’s unusual, what similar cases looked like, and what happened when someone decided differently. Tightening the decision owner’s feedback loop means connecting outcomes back to the specific decisions that produced them, in real time, not in a quarterly report. The trick is engineering productive friction: confidence scores, mandatory holds on high-stakes decisions, escalation paths that route the right cases to the right humans.
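One way to picture productive friction is a small router that picks a lane for each case. The sketch below is a minimal illustration, not a reference design: the `Case` fields, the `high_stakes` flag, and the 0.95 threshold are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Case:
    case_id: str
    confidence: float      # model's confidence in its recommendation, 0..1
    high_stakes: bool      # assumed flag, e.g. a novel risk class
    why_unusual: str = ""  # context shown to the human reviewer


# Hypothetical threshold; in practice this would be tuned per line of business.
AUTO_THRESHOLD = 0.95


def route(case):
    """Pick a lane for a case: 'auto', 'review', or 'hold'."""
    if case.high_stakes:
        return "hold"      # mandatory hold, regardless of model confidence
    if case.confidence >= AUTO_THRESHOLD:
        return "auto"      # routine: decide at machine speed
    return "review"        # escalate to a human with context attached


inbox = [
    Case("GL-001", 0.99, False),
    Case("GL-002", 0.71, False, "payroll doubled since last renewal"),
    Case("NEW-003", 0.97, True, "first submission in a new risk class"),
]
lanes = {c.case_id: route(c) for c in inbox}
print(lanes)
```

Note that the high-stakes check comes first. A confident model on a novel risk class still gets the hold, which is the point of choosing the clock speed by the nature of the decision rather than by the score alone.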

Products that dump 500 AI-flagged items on a human are treating the human as a pattern matcher, a function AI should handle. Products that surface five genuine exceptions with full context are routing work to the exception decider and the decision owner, the functions where humans are irreplaceable. That’s the design problem worth solving and where the real value will accrue.

The unbundling

The 150-pound computer isn’t being replaced. It’s being unbundled. Every company that employs humans to orient and decide is now answering the same question, whether it knows it or not: which functions in the bundle are you absorbing, which are you augmenting, and which are you designing around as permanently human?

Get that decomposition wrong and you’ve built a fast system nobody trusts, or an expensive human doing work a model should handle. Get it right and you’ve found something better than automation: a system that converts today’s exceptions into tomorrow’s routine, freeing the 150-pound computer to work on problems it couldn’t reach before.

My name is Matt Brown. I’m a partner at Matrix, where I invest in and help early-stage fintech and vertical software startups. Matrix is an early-stage VC that leads pre-seed to Series As from an $800M fund across AI, developer tools and infra, fintech, B2B software, healthcare, and more. If you’re building something interesting in fintech or vertical software, I’d love to chat: mb@matrix.vc

[1] The OODA loop directly inspired many of the frameworks that startups have used for years, from Steve Blank’s Customer Development methodology to Eric Ries’s Lean Startup concept.